Blog

Welcome to the section of Sabal Food Safety Consulting's web page dedicated to brainstorming discussions about food safety and related topics. Feel free to leave your comments or opinions on the topics under discussion. Should you encounter any difficulty using the Blog, send us an email so we can investigate the problem.

Here you'll find all publicly shared articles. To leave a comment on an article, click its title: the article opens in a new window, and at the bottom there is a section where you can share your comments.

You can also register as a member to receive notifications when new articles are published and to access articles written exclusively for registered members. Registration is free.

It is nice to have you here!

Preventive Controls & HACCP Integration

November 2015

Almost everyone in the food & beverage industry is concerned with implementing the new FDA “Food Safety Plan” and with developing and implementing the “Preventive Controls”. From my point of view, the greatest worry is how to integrate current food safety programs, in which HACCP is the main component for analyzing the risks of food safety hazards, with the FDA’s “Food Safety Plan”.

In my opinion, the integration process relies mainly on risk analysis: what distinguishes a hazard that requires a critical control point from a hazard that requires a preventive control is its level of risk.

21 CFR 117 - Current Good Manufacturing Practice, Hazard Analysis, and Risk-Based Preventive Controls for Human Food, Section 117.3 – Definitions, defines a “hazard requiring a preventive control” as: “a known or reasonably foreseeable hazard for which a person knowledgeable about the safe manufacturing, processing, packing, or holding of food would, based on the outcome of a hazard analysis (which includes an assessment of the severity of the illness or injury if the hazard were to occur and the probability that the hazard will occur in the absence of preventive controls), establish one or more preventive controls to significantly minimize or prevent the hazard in a food and components to manage those controls (such as monitoring, corrections or corrective actions, verification, and records) as appropriate to the food, the facility, and the nature of the preventive control and its role in the facility's food safety system”.

Because the FDA also developed the guidance for implementing HACCP for seafood products under 21 CFR 123 – Fish and Fishery Products, I’m going to use it as the reference for HACCP definitions and compare it with the preventive controls rule. There, a “significant hazard” is a hazard that is “reasonably likely to occur”, and a food safety hazard that is reasonably likely to occur is defined as “one for which a prudent processor would establish controls because experience, illness data, scientific reports, or other information provide a basis to conclude that there is a reasonable possibility that it will occur in the particular type of fish or fishery product being processed in the absence of those controls.”

Similarities. Both the preventive controls rule and the Seafood HACCP regulation require:

1) A person who is knowledgeable about food safety hazards, the products being processed, and the development of control measures.

2) Measures to control the hazards whose risk, based on severity and probability, warrants control.

3) That those measures be implemented because, “in the absence of those controls”, the identified hazards could cause illness or injury.

4) That hazards from incoming goods (raw materials, ingredients, processing aids, packaging materials, etc.) and hazards from the process be analyzed, even though this is not part of the definitions.

Difference. In the preventive controls rule, a control measure must be implemented whenever “a known or reasonably foreseeable hazard” is identified. In the Seafood HACCP regulation, a control measure must be implemented whenever a hazard is “reasonably likely to occur”. However, neither regulation indicates how to measure the risk of “a known or reasonably foreseeable hazard” or of a hazard that is “reasonably likely to occur”.

With this in mind, choosing the tool used to measure the level of risk will be the biggest challenge for everyone responsible for analyzing the risks of food safety hazards in any product or process. This tool should provide a simple but thorough description of the different levels of severity and likelihood of the hazards. I believe there is also a good opportunity to add a third variable to this risk analysis tool: detection. Whenever food safety hazards are analyzed, their risk should be assessed by determining the severity, likelihood, and detection of each hazard.

What is the severity of a food safety hazard? It is the consequence the hazard produces, normally expressed as death, illness, or injury. What is the likelihood of a food safety hazard? It is the chance that the identified hazard occurs during operations. What is the detection of a food safety hazard? It is the ability to detect the hazard.
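One common way to combine the three variables is the risk priority number used in FMEA (failure mode and effects analysis): severity × likelihood × detection. The Python sketch below is only an illustration of that idea under stated assumptions: the 1 to 5 scales, the FMEA convention that a higher detection score means the hazard is *harder* to detect, the cut-off of 27, and the example hazards are all hypothetical, not taken from either regulation.

```python
from dataclasses import dataclass

# Hypothetical 1-5 scales; a real program would describe each level in writing.
# Detection is scored FMEA-style: 1 = almost certain to be detected,
# 5 = almost certain to go undetected, so a higher score means a higher risk.
SCALE = range(1, 6)


@dataclass
class Hazard:
    name: str
    severity: int    # 1 = negligible ... 5 = death
    likelihood: int  # 1 = remote ... 5 = frequent
    detection: int   # 1 = easily detected ... 5 = undetectable

    def __post_init__(self) -> None:
        for attr in ("severity", "likelihood", "detection"):
            if getattr(self, attr) not in SCALE:
                raise ValueError(f"{attr} must be from 1 to 5")

    def risk_score(self) -> int:
        """Risk priority number: severity x likelihood x detection (1 to 125)."""
        return self.severity * self.likelihood * self.detection


def requires_control(hazard: Hazard, threshold: int = 27) -> bool:
    """Hypothetical cut-off: a score of 27 (3 x 3 x 3) or more is treated as significant."""
    return hazard.risk_score() >= threshold


# Illustrative scores only, not taken from any real hazard analysis.
hazards = [
    Hazard("Undeclared allergen", severity=5, likelihood=3, detection=4),
    Hazard("Metal fragments", severity=3, likelihood=2, detection=2),
]
for h in hazards:
    print(h.name, h.risk_score(), requires_control(h))
# Undeclared allergen 60 True
# Metal fragments 12 False
```

Because the score is a product, a severe hazard that is easy to detect can rank below a moderate hazard that is hard to detect; whether that trade-off is acceptable is the kind of decision the qualified individual would need to justify and document.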

The World Health Organization / Food and Agriculture Organization of the United Nations document “Risk Characterization of Microbiological Hazards in Food” describes several methods for determining the level of risk. Each method has its own pros and cons, mostly related to the depth of knowledge and skills of the individual(s) performing the analysis of the risks to food safety.

Whatever method is used to determine the level of risk of the hazards in a food safety system, the “qualified individual” must be highly skilled at applying sound scientific knowledge in order to comply with the preventive controls rule while maintaining the HACCP program already in place.

The value of a person

My brother recently sent me a link to a short video of Victor Küppers’ talk “The Value of a Person”, organized by TEDx Andorra La Vella. I was delighted with the content, and I would like to summarize his presentation and use it as an introduction to attitude towards food safety.

Victor explains that the value of a person can be expressed by a simple formula: V = (K + S) x A, where V is the value of a person, K is knowledge, S is skills, and A is attitude. For anyone with a train-the-trainer background, this will sound very familiar.

The objective of the presentation was to highlight the importance of a person’s attitude. As Victor explained, attitude multiplies the sum of K and S! You make a difference in your environment, and you influence people, through your attitude. Knowledge and skills are not only necessary but crucial. However, we choose our friends by their attitudes; we don’t ask our friends for their curricula vitae to decide whether they will become our friends.
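A small worked example makes the effect of the multiplication visible. The 0 to 10 scale and the scores below are assumptions chosen only for illustration; the talk, as summarized here, does not define a scale.

```python
def person_value(knowledge: float, skills: float, attitude: float) -> float:
    """Victor Küppers' formula: V = (K + S) x A."""
    return (knowledge + skills) * attitude


# Hypothetical scores on a 0-10 scale, for illustration only.
print(person_value(8, 8, 2))  # strong knowledge and skills, poor attitude: 32
print(person_value(5, 5, 8))  # average knowledge and skills, good attitude: 80
```

Because A multiplies the sum, a low attitude score drags down even high knowledge and skills, and an attitude of zero gives a value of zero whatever K and S are.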

So, how does a person’s attitude relate to food safety? The lives of consumers are in the hands of the companies that manufacture the food products they eat.

We all need knowledgeable and skilled individuals processing or manufacturing the products we consume. The training of these individuals must be effective, and there must be a way to measure how knowledgeable and skilled they are.

To assess knowledge, a test or a meeting to discuss the training content is satisfactory, as long as the trainer, who must be knowledgeable on the topic, evaluates the results. However, tests assess only knowledge, not skills. A solid training program must also include an evaluation of the skills required to work safely in a food processing environment.

The last component of Victor’s formula is attitude. How can we determine whether a person working in food processing has the right attitude? This may not be easy, but it is not impossible.

The bell curve (normal distribution) is often mentioned when assessing a probability distribution. The values obtained with any method used to determine the value of a person in the food industry could be plotted on a bell-shaped curve, and the results analyzed to determine the potential risk that employees pose to food manufacturing operations.

In general terms, a bell-shaped curve can be divided into three zones: the center is the average, one side is below average, and the other side is above average. Employees whose value falls in the average zone, or above it, represent a low risk to food manufacturing or processing operations. However, employees who fall on the “below average” side of the curve represent a high risk: a significant hazard.
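The three zones can be sketched in Python with the standard `statistics` module. Where to draw the zone boundaries is an assumption: here the “average” zone is taken as one standard deviation on either side of the mean, and the employee names and values are made up.

```python
from statistics import mean, pstdev


def bell_curve_zones(values: dict[str, float], width: float = 1.0) -> dict[str, str]:
    """Assign each employee to a zone of the bell curve.

    Hypothetical rule: the 'average' zone spans `width` standard deviations
    on either side of the mean; values below it are 'below average'.
    """
    data = list(values.values())
    mu, sigma = mean(data), pstdev(data)
    zones = {}
    for name, value in values.items():
        if value < mu - width * sigma:
            zones[name] = "below average: high risk"
        elif value > mu + width * sigma:
            zones[name] = "above average: low risk"
        else:
            zones[name] = "average: low risk"
    return zones


# Hypothetical employee values, for example obtained with V = (K + S) x A.
scores = {"Ana": 80, "Ben": 75, "Carla": 78, "Dan": 40, "Eva": 82}
for name, zone in bell_curve_zones(scores).items():
    print(name, zone)
```

With these values the mean is 71 and the standard deviation is about 15.7, so only Dan falls in the high-risk zone. Changing `width` moves the boundaries, which is a policy decision rather than a statistical one.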

In a HACCP environment, what do we do whenever we have a significant hazard? We establish a critical control point, identify a critical limit for it, monitor that limit continuously or frequently to make sure we remain within the acceptable range, and develop corrective actions, verification procedures, and record-keeping.
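Those elements (critical limit, monitoring, corrective actions, verification, and records) can be sketched for a single critical control point in Python. The cooking step, the 74 °C minimum, and the wording of the corrective action are hypothetical examples, not requirements taken from a regulation.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class CriticalControlPoint:
    """One CCP with a minimum critical limit, monitoring, corrective actions and records."""
    name: str
    critical_limit: float                               # hypothetical minimum acceptable value
    records: list[dict] = field(default_factory=list)  # every check is kept

    def monitor(self, reading: float, when: datetime) -> bool:
        """Check a reading against the critical limit and record the result."""
        within_limit = reading >= self.critical_limit
        action = None if within_limit else self.corrective_action(reading)
        self.records.append(
            {"time": when, "reading": reading, "within_limit": within_limit, "action": action}
        )
        return within_limit

    def corrective_action(self, reading: float) -> str:
        return (f"{self.name}: {reading} is below the critical limit of {self.critical_limit}; "
                "hold the product, evaluate it and correct the cause")

    def verify(self) -> bool:
        """Verification: every recorded deviation has a corrective action."""
        return all(r["within_limit"] or r["action"] is not None for r in self.records)


# Hypothetical cooking step with a minimum internal temperature of 74 °C.
cook = CriticalControlPoint("Cooking", critical_limit=74.0)
print(cook.monitor(76.5, datetime(2014, 11, 3, 8, 0)))  # True
print(cook.monitor(71.2, datetime(2014, 11, 3, 9, 0)))  # False, corrective action recorded
print(cook.verify())  # True
```

A real HACCP plan would also state who monitors, how often, and who reviews the records; those details are left out to keep the sketch short.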

Senior management of any food facility is responsible for understanding the hazards in the process and for providing the resources to acquire knowledgeable and qualified individuals (also known as human resources) who will direct and control food processing or manufacturing operations. Senior management is also responsible for the effective implementation of the training program in any food facility.

Senior management is responsible for finding a way to determine the value of all employees and for implementing corrective and preventive actions according to the risk each employee presents.

Audits and Auditors - Facts and Myths

Sabal Food Safety Consulting / August 6th, 2014

Food safety hazards, the human element

November 2014

Looking at the definitions and methodology of the HACCP System, we eventually understand that there are three basic types of hazards: biological, chemical, and physical.

When implementing a HACCP System, prerequisite programs (GMPs) must already be in place and will be used by the HACCP System to “prevent, eliminate or reduce hazards to an acceptable level”. I’m borrowing this definition from the HACCP System and applying it to prerequisite programs.

Anyone responsible for developing and implementing a HACCP System will identify specific hazards of the three types (biological, chemical, and physical) and will perform a risk analysis based on the severity and likelihood of the hazards to determine how significant they are. This process is fundamental when identifying critical control points or preventive controls (FSMA).

What we are missing is a type of hazard that, by its nature, may always be considered “significant”: the “human element”.

For example, current recall statistics show that the number one cause of recalls is related to allergen control. Whenever we have allergens in our facility or process, they show up in our HACCP Program as chemical hazards.

However, a “root cause analysis” shows that the main reason allergens are the number one cause of recalls is the “human element”. Why?

People are responsible for identifying the allergenic agents in food, including them in the HACCP program, handling them inside the facility, developing labels that declare the allergens used and reviewing those labels frequently, and cleaning all food contact surfaces to prevent cross-contamination. The only way to accomplish this effectively is to have “qualified individuals”, as defined by FSMA, and that requires training.

Here is where the effectiveness of the “training program” comes into play. People must be trained to:

1) Obtain the knowledge required to develop and implement a food safety system.

2) Start applying that knowledge and converting it into skills. This is experience.

3) Have the right attitude towards the job and its responsibilities and activities. Attitude is inherent to each person, as is tolerance of other opinions. This is where the human element plays a big role as a food safety hazard.

Training requirements appear in the HACCP regulations as well as in the Good Manufacturing Practices regulation. Employees must be trained.

The challenge: measuring the effectiveness of the training, including the attitude of personnel towards the food safety program.

What is a system?

Sabal Food Safety Consulting's blog

May 25th, 2014

- The System belongs to the company! Not to the individuals that develop it…

-

A system is based on documentation! If it is not documented,

- How can it be used for training? Because, If training depends on the trainer’s knowledge, trainings will always be different…

- How it can be audited?

- How it can be verified?

- How it can be validated?

-

Food Safety Systems use the ISO structure of a quality system (Management commitment, management reviews, corrective actions, internal audits, etc), plus:

-

Main component: a HACCP Program

- Which is why having HACCP training is a pre-requisite for anyone developing a Food Safety System

-

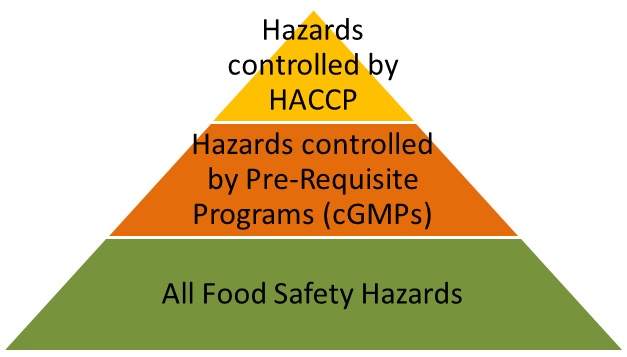

Hazards controlled by the HACCP Program:

- Related to the process

- Inherent to the ingredients and finished products

-

Supporting components: pre-requisite programs based on GMP’s

- Hazards controlled by the pre-requisite programs:

- Related to the internal and external environment (Outside grounds, plant construction and design, lighting, sanitary operations, pest control, etc).

- Related to the equipment, tools and utensils.

- Related to the personnel (clothing, jewelry, disease control, visitors, personal cleanliness, etc).

-

Main component: a HACCP Program